Calculate the errorterm for the network

The error value for the current output is calculated. The current optimal output must be given prior to calling this function.

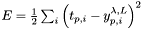

The formula used is  .

.

#include <PerceptronNetwork.h>

Public Member Functions

| Type | Member |
|---|---|
| | PerceptronNetwork (vector< unsigned int > desc_layers, const char *network_name="unnamed", const ActivationFunction *fact=&ActivationFunctions::fact_tanh) |
| | ~PerceptronNetwork (void) |
| | PerceptronNetwork (PerceptronNetwork &source) |
| void | setActivationFunction (PerceptronLayerType type, const ActivationFunction *fact) |
| void | save (fstream &fs) const |
| bool | load (fstream &fs) |
| void | randomizeParameters (const RandomFunction *weight_func, const RandomFunction *theta_func) |
| void | setInput (vector< double > &in) |
| void | setOptimalOutput (vector< double > &optimal) |
| vector< double > | getOutput (void) const |
| double | errorTerm (void) const |
| void | resetDiffs (void) |
| void | propagate (void) |
| void | backpropagate (void) |
| void | postprocess (void) |
| void | update (void) |
| void | dumpNetworkGraph (const char *filename) const |
| double | getLearningParameter (void) const |
| void | setLearningParameter (double epsilon) |
| double | getOptimalTolerance (void) const |
| void | setOptimalTolerance (double tolerance) |
| double | getWeightDecayParameter (void) const |
| void | setWeightDecayParameter (double factor) |
| double | getMomentumTermParameter (void) const |
| void | setMomentumTermParameter (double factor) |

Public Attributes

| Type | Member |
|---|---|
| const char * | name |
| vector< double > | input |
| vector< double > | output |

Protected Attributes

| Type | Member |
|---|---|
| vector< double > | output_optimal |
| vector< PerceptronLayer * > | layers |
| double | epsilon |
| double | opt_tolerance |
| double | weight_decay |
| double | momentum_term |

Friends

| Type | Member |
|---|---|
| class | WeightMatrix |

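`PerceptronNetwork::PerceptronNetwork (vector< unsigned int > desc_layers, const char *network_name="unnamed", const ActivationFunction *fact=&ActivationFunctions::fact_tanh)`

PerceptronNetwork constructor. Construct a multilayer perceptron network from the layer description given in desc_layers. The size of the vector specifies the number of layers, and each element specifies the number of neurons in the corresponding layer.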
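
To illustrate what a layer description implies, the following sketch counts the trainable parameters of a network such as {2, 3, 1} (two inputs, three hidden neurons, one output). It assumes fully connected adjacent layers and one theta value per non-input neuron; countWeights and countThetas are hypothetical helpers, not part of the PerceptronNetwork API.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper: number of weights between consecutive layers,
// assuming every neuron of layer i is connected to every neuron of layer i + 1.
std::size_t countWeights(const std::vector<unsigned int>& desc_layers) {
    // At least one input and one output layer are required.
    assert(desc_layers.size() >= 2);
    std::size_t total = 0;
    for (std::size_t i = 0; i + 1 < desc_layers.size(); ++i) {
        total += static_cast<std::size_t>(desc_layers[i]) * desc_layers[i + 1];
    }
    return total;
}

// Hypothetical helper: number of theta values, assuming one per neuron
// in every layer except the input layer.
std::size_t countThetas(const std::vector<unsigned int>& desc_layers) {
    assert(desc_layers.size() >= 2);
    std::size_t total = 0;
    for (std::size_t i = 1; i < desc_layers.size(); ++i) {
        total += desc_layers[i];
    }
    return total;
}
```

For {2, 3, 1} this gives 2*3 + 3*1 = 9 weights and 3 + 1 = 4 theta values under the stated assumptions. With the actual class, the same vector would be passed as the desc_layers argument of the constructor.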

`PerceptronNetwork::~PerceptronNetwork (void)`

PerceptronNetwork destructor. Remove all layers stored within the network.

`PerceptronNetwork::PerceptronNetwork (PerceptronNetwork &source)`

Copy constructor.

`void PerceptronNetwork::backpropagate (void)`

Backpropagation algorithm for the entire network. Calculate the delta error signals of all neurons in every layer. Every neuron must have a proper input/output signal assigned, and the training target output_optimal is used for the calculation.
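
A minimal sketch of the delta computation described above, assuming tanh neurons (the constructor's default activation is ActivationFunctions::fact_tanh) and a squared-error objective. Because tanh'(net) = 1 - tanh(net)^2, the derivative can be expressed through the neuron output o. The function names and the weight layout w[j][k] are hypothetical; the library's internal representation is not documented on this page.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Output layer: delta_k = (t_k - o_k) * (1 - o_k^2) for tanh neurons,
// where t is the optimal (target) output and o the actual output.
std::vector<double> outputDeltas(const std::vector<double>& output,
                                 const std::vector<double>& optimal) {
    assert(output.size() == optimal.size());
    std::vector<double> delta(output.size());
    for (std::size_t k = 0; k < output.size(); ++k) {
        delta[k] = (optimal[k] - output[k]) * (1.0 - output[k] * output[k]);
    }
    return delta;
}

// Hidden layer: delta_j = (1 - o_j^2) * sum_k w[j][k] * delta_k,
// where w[j][k] connects hidden neuron j to neuron k of the next layer.
std::vector<double> hiddenDeltas(const std::vector<double>& hidden_out,
                                 const std::vector<std::vector<double>>& w,
                                 const std::vector<double>& next_delta) {
    assert(w.size() == hidden_out.size());
    std::vector<double> delta(hidden_out.size(), 0.0);
    for (std::size_t j = 0; j < hidden_out.size(); ++j) {
        assert(w[j].size() == next_delta.size());
        double sum = 0.0;
        for (std::size_t k = 0; k < next_delta.size(); ++k) {
            sum += w[j][k] * next_delta[k];
        }
        delta[j] = (1.0 - hidden_out[j] * hidden_out[j]) * sum;
    }
    return delta;
}
```

The deltas are computed from the output layer backwards, since each hidden layer needs the deltas of the layer to its right.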

`void PerceptronNetwork::dumpNetworkGraph (const char *filename) const`

Dump the whole neural network to the file filename as a graph in the GraphViz file format (www.graphviz.org).
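
One way to express a layered network in GraphViz's DOT language is sketched below: one node per neuron and one edge from every neuron to every neuron of the next layer. toDot and the node naming scheme n<layer>_<index> are hypothetical; the file actually written by dumpNetworkGraph() may contain more detail, such as the weights.

```cpp
#include <cassert>
#include <cstddef>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical sketch: render a fully connected layered network as a DOT digraph.
std::string toDot(const std::vector<unsigned int>& desc_layers, const std::string& name) {
    assert(desc_layers.size() >= 2);
    std::ostringstream dot;
    dot << "digraph \"" << name << "\" {\n  rankdir=LR;\n";  // draw layers left to right
    // One node per neuron.
    for (std::size_t l = 0; l < desc_layers.size(); ++l)
        for (unsigned int i = 0; i < desc_layers[l]; ++i)
            dot << "  n" << l << "_" << i << ";\n";
    // One edge from each neuron to each neuron of the following layer.
    for (std::size_t l = 0; l + 1 < desc_layers.size(); ++l)
        for (unsigned int i = 0; i < desc_layers[l]; ++i)
            for (unsigned int j = 0; j < desc_layers[l + 1]; ++j)
                dot << "  n" << l << "_" << i << " -> n" << (l + 1) << "_" << j << ";\n";
    dot << "}\n";
    return dot.str();
}
```

Saved to a file such as net.dot, the text can be rendered with the GraphViz command `dot -Tpng net.dot -o net.png`.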

Calculate the errorterm for the network The error value for the current output is calculated. The current optimal output must be given prior to calling this function.

The formula used is |
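
The exact formula is only available as an image on this page. As an assumption, the sketch below uses a common choice for this kind of network: half the sum of squared differences between the optimal output t and the actual output o, E = 1/2 * sum_k (t_k - o_k)^2. errorTermSketch is a hypothetical name, and the library's formula may differ.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Assumed error term: E = 1/2 * sum_k (t_k - o_k)^2.
double errorTermSketch(const std::vector<double>& output,
                       const std::vector<double>& optimal) {
    // The optimal output must have been set and must match the output size.
    assert(output.size() == optimal.size());
    double e = 0.0;
    for (std::size_t k = 0; k < output.size(); ++k) {
        const double d = optimal[k] - output[k];
        e += d * d;
    }
    return 0.5 * e;
}
```

The factor 1/2 is a convention that cancels when the error is differentiated, leaving (t_k - o_k) times the activation derivative as the output-layer delta.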

`double PerceptronNetwork::getLearningParameter (void) const`

Getter for the epsilon parameter.

`double PerceptronNetwork::getMomentumTermParameter (void) const`

Getter for the momentum term parameter.

`double PerceptronNetwork::getOptimalTolerance (void) const`

Getter for the optimal tolerance parameter.

`vector< double > PerceptronNetwork::getOutput (void) const`

Getter for the entire network output.

`double PerceptronNetwork::getWeightDecayParameter (void) const`

Getter for the weight decay parameter.

`bool PerceptronNetwork::load (fstream &fs)`

Load the entire network from the stream fs.

`void PerceptronNetwork::postprocess (void)`

Postprocess algorithm for the entire network.

`void PerceptronNetwork::propagate (void)`

Propagation algorithm for the entire network. The input levels of the network must first be set using the setInput() method. Afterwards, the output of the network can be obtained using the getOutput() method.
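
A forward pass of this kind can be sketched as follows. The sketch assumes fully connected layers, tanh activations, and that each neuron computes net_j = sum_i w_ij * x_i - theta_j before applying the activation; the sign convention for theta, the weight layout w[i][j], and the function names are assumptions for illustration only.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// One fully connected layer: w[i][j] is the weight from input neuron i to
// output neuron j, theta[j] the threshold of output neuron j.
std::vector<double> propagateLayer(const std::vector<double>& x,
                                   const std::vector<std::vector<double>>& w,
                                   const std::vector<double>& theta) {
    assert(w.size() == x.size());
    std::vector<double> out(theta.size());
    for (std::size_t j = 0; j < theta.size(); ++j) {
        double net = -theta[j];  // assumed sign convention for theta
        for (std::size_t i = 0; i < x.size(); ++i) {
            assert(w[i].size() == theta.size());
            net += w[i][j] * x[i];
        }
        out[j] = std::tanh(net);
    }
    return out;
}

// Propagate left to right: weights[l] and thetas[l] connect layer l to layer l + 1.
// The input vector is used directly as the output of the input layer (an assumption).
std::vector<double> propagateNetwork(std::vector<double> signal,
                                     const std::vector<std::vector<std::vector<double>>>& weights,
                                     const std::vector<std::vector<double>>& thetas) {
    assert(weights.size() == thetas.size());
    for (std::size_t l = 0; l < weights.size(); ++l) {
        signal = propagateLayer(signal, weights[l], thetas[l]);
    }
    return signal;
}
```

The returned vector plays the role of the network output that getOutput() provides after propagate().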

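`void PerceptronNetwork::randomizeParameters (const RandomFunction *weight_func, const RandomFunction *theta_func)`

Randomize the variable network parameters: the weights (using weight_func) and the theta values (using theta_func).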
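
RandomFunction is not documented on this page. The sketch below stands in for it with a std::function<double()> (an assumption) and fills the weights and theta values from two separate generators, mirroring the weight_func/theta_func split of randomizeParameters(). RandomSource, uniformSource and randomizeSketch are hypothetical names, and the ranges used are illustrative, not library defaults.

```cpp
#include <cassert>
#include <functional>
#include <random>
#include <vector>

// Hypothetical stand-in for RandomFunction: returns a new random value per call.
using RandomSource = std::function<double()>;

// Uniform generator on [lo, hi) with a fixed seed for reproducible runs.
RandomSource uniformSource(double lo, double hi, unsigned seed) {
    assert(lo < hi);
    std::mt19937 gen(seed);
    std::uniform_real_distribution<double> dist(lo, hi);
    // The engine is captured by value; 'mutable' lets each call advance it.
    return [gen, dist]() mutable { return dist(gen); };
}

// Overwrite every weight and theta value with a fresh random value.
void randomizeSketch(std::vector<double>& weights, std::vector<double>& thetas,
                     const RandomSource& weight_func, const RandomSource& theta_func) {
    for (double& w : weights) w = weight_func();
    for (double& t : thetas) t = theta_func();
}
```

Small symmetric ranges around zero are a common choice for tanh networks, because they keep the initial net inputs in the region where tanh is not saturated.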

`void PerceptronNetwork::resetDiffs (void)`

Reset all learned parameters. Call this after an update has been made.

`void PerceptronNetwork::save (fstream &fs) const`

Save the entire network as text to the stream fs.
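
The text format used by save() and load() is not described here. The following sketch uses a hypothetical format (layer count, layer sizes, then the flattened parameters) to show a lossless text round trip. It takes std::ostream/std::istream, so it works with std::fstream as well as std::stringstream; NetworkParams, saveSketch and loadSketch are illustrative names only.

```cpp
#include <cassert>
#include <cstddef>
#include <iostream>
#include <sstream>
#include <vector>

// Hypothetical container for what needs to be persisted.
struct NetworkParams {
    std::vector<unsigned int> layers;  // neurons per layer
    std::vector<double> values;        // weights and theta values, flattened
};

void saveSketch(std::ostream& os, const NetworkParams& p) {
    // At least one input and one output layer.
    assert(p.layers.size() >= 2);
    os.precision(17);  // 17 significant digits round-trip any double exactly
    os << p.layers.size();
    for (unsigned int n : p.layers) os << ' ' << n;
    os << '\n' << p.values.size();
    for (double v : p.values) os << ' ' << v;
    os << '\n';
}

// Returns false if the stream ends early or contains malformed data.
bool loadSketch(std::istream& is, NetworkParams& p) {
    std::size_t count = 0;
    if (!(is >> count)) return false;
    p.layers.assign(count, 0);
    for (unsigned int& n : p.layers)
        if (!(is >> n)) return false;
    if (!(is >> count)) return false;
    p.values.assign(count, 0.0);
    for (double& v : p.values)
        if (!(is >> v)) return false;
    return true;
}
```

Returning bool from the loader matches the shape of load(), which reports success or failure rather than throwing.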

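`void PerceptronNetwork::setActivationFunction (PerceptronLayerType type, const ActivationFunction *fact)`

Set the activation function fact for all layers of the given type.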
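
The sketch below models an activation function as a function/derivative pair (backpropagation needs the derivative) and applies it to every layer of a given type. ActivationSketch, LayerTypeSketch with its Input/Hidden/Output enumerators, LayerSketch and setActivationFunctionSketch are hypothetical stand-ins for ActivationFunction, PerceptronLayerType and setActivationFunction(); the real types and enumerators are not listed on this page.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical activation function: the function and its derivative.
struct ActivationSketch {
    double (*f)(double);
    double (*df)(double);
};

double tanhF(double x) { return std::tanh(x); }
double tanhD(double x) { const double t = std::tanh(x); return 1.0 - t * t; }
double logisticF(double x) { return 1.0 / (1.0 + std::exp(-x)); }
double logisticD(double x) { const double s = logisticF(x); return s * (1.0 - s); }

const ActivationSketch fact_tanh_sketch{tanhF, tanhD};
const ActivationSketch fact_logistic_sketch{logisticF, logisticD};

enum class LayerTypeSketch { Input, Hidden, Output };

struct LayerSketch {
    LayerTypeSketch type;
    const ActivationSketch* fact;
};

// Assign fact to every layer whose type matches.
void setActivationFunctionSketch(std::vector<LayerSketch>& layers,
                                 LayerTypeSketch type, const ActivationSketch* fact) {
    assert(fact != nullptr);
    for (LayerSketch& layer : layers) {
        if (layer.type == type) layer.fact = fact;
    }
}
```

Keeping the activation as a shared, non-owning pointer lets many layers refer to the same function object, which is consistent with the const ActivationFunction * parameter in the real signature.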

`void PerceptronNetwork::setInput (vector< double > &in)`

Setter for the entire network input.

`void PerceptronNetwork::setLearningParameter (double epsilon)`

Setter for the epsilon parameter.

`void PerceptronNetwork::setMomentumTermParameter (double factor)`

Setter for the momentum term parameter.

`void PerceptronNetwork::setOptimalOutput (vector< double > &optimal)`

Setter for the optimal (target) network output. Must be called before any learning algorithm, such as backpropagate(), is invoked.

`void PerceptronNetwork::setOptimalTolerance (double tolerance)`

Setter for the optimal tolerance parameter.

`void PerceptronNetwork::setWeightDecayParameter (double factor)`

Setter for the weight decay parameter.

`void PerceptronNetwork::update (void)`

Update algorithm for the entire network.
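
This page does not spell out how update() combines the learning parameters. One common rule, assumed here, is dw(t) = epsilon * diff + momentum * dw(t-1) - decay * w, where diff is the gradient term accumulated by backpropagation. Clearing diff afterwards corresponds to the note that resetDiffs() should be called after an update. WeightSketch, updateSketch and resetDiffsSketch are hypothetical names.

```cpp
#include <cassert>
#include <vector>

// Hypothetical per-weight state.
struct WeightSketch {
    double w = 0.0;            // current weight
    double diff = 0.0;         // accumulated delta_j * o_i from backpropagation
    double last_change = 0.0;  // change applied by the previous update
};

// Assumed rule: dw(t) = epsilon * diff + momentum * dw(t-1) - decay * w
void updateSketch(std::vector<WeightSketch>& weights, double epsilon,
                  double momentum, double decay) {
    assert(epsilon > 0.0 && momentum >= 0.0 && decay >= 0.0);
    for (WeightSketch& ws : weights) {
        const double change = epsilon * ws.diff + momentum * ws.last_change - decay * ws.w;
        ws.w += change;
        ws.last_change = change;
    }
}

// Clear the accumulated gradient terms once an update has been applied.
void resetDiffsSketch(std::vector<WeightSketch>& weights) {
    for (WeightSketch& ws : weights) ws.diff = 0.0;
}
```

With the recommended ranges (epsilon 0.05 to 0.5, momentum 0.5 to 0.9, weight decay 0.005 to 0.03), the decay term acts as a small pull of every weight towards zero.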

`friend class WeightMatrix`

The GUI visualization class is declared a friend to allow access to the individual layers.

`double PerceptronNetwork::epsilon`

Generic learning parameter epsilon. It should be between 0.05 and 0.5.

`vector< double > PerceptronNetwork::input`

Input vector, fed into the first layer of the network. The number of elements must equal the number of neurons in the input layer.

`vector< PerceptronLayer * > PerceptronNetwork::layers`

Left-to-right list of layers within the network. There must be at least two layers (one input and one output layer), but the list can be arbitrarily large. However, more than four layers do not achieve any further improvement in the network's capabilities.

`double PerceptronNetwork::momentum_term`

Momentum term parameter. It should be between 0.5 and 0.9.

`const char * PerceptronNetwork::name`

Symbolic name of the network. It is never used by the methods themselves, but is convenient for identifying a network.

`double PerceptronNetwork::opt_tolerance`

Optimal tolerance parameter. It should be between 0.0 and 0.2.

`vector< double > PerceptronNetwork::output`

Output vector, resulting from propagation through the entire network. The number of elements equals the number of output neurons in the network.

`vector< double > PerceptronNetwork::output_optimal`

Optimal expected output vector, used for the learning process. It is not needed for simple propagation.

`double PerceptronNetwork::weight_decay`

Weight decay parameter. It should be between 0.005 and 0.03.

1.3-rc3
